Bridging industry-specific CRMs

From modern REST APIs to legacy FTP servers—Learn how Elixir « behaviours » helped us connect multiple CRMs to our auction platform.

21 January 2025 – Goulven CLEC'H

Business context and need

Enchères Immo is a real estate auction platform where I’ve worked since 2020, starting as an intern and now as software lead. Our clients are real estate professionals who want to sell properties through online auctions. In a difficult period for the real estate market, we aim to help buyers and sellers find the best price faster and more transparently.

Elixir and Phoenix power our platform, from back-end to front-end, and their reliability and scalability are key to our success. One of my 2025 goals is to share more about our achievements and challenges with Elixir.

One key feature professionals asked for was the ability to import their properties. Most of them are used to spending time creating their properties and listings once in their favourite Customer Relationship Management software (CRM) and being able to export them to other platforms in one click. Having to re-enter everything manually was a massive pain point for them, and none of our competitors offered a solution.

More than a way to attract new clients, linking our platform to those important actors in the real estate market has sometimes been the first step to a more in-depth collaboration. Integrating a technical ecosystem can be a way to integrate a business ecosystem ;)

However, the problem with industry-specific CRMs is the lack of standardisation. Each has its own API (from modern REST APIs to legacy FTP servers) with different data structures, formats (JSON, XML, CSV), and documentation (or lack thereof). We had to support them all without rewriting the whole import process for each new CRM, which would have duplicated code and reduced maintainability…

What’s a behaviour?

In other programming languages, you might have encountered interfaces (Java, C#), protocols (Clojure, Swift), traits (Rust), or abstract classes (Python, C++). In Elixir, we have « behaviours ».

A behaviour defines a set of functions a module must implement. It enforces consistency across modules while enabling polymorphism.

We start defining a behaviour with the @callback module attribute, which specifies the functions that must be implemented. Here, greet/1 is the required function, which must take a string (name) and return another string:

    defmodule GreeterBehaviour do
      @callback greet(name :: String.t()) :: String.t()
    end

To use this behaviour, we create modules that declare the @behaviour attribute and implement all the specified functions. The @impl true annotation is optional but highly recommended: it ensures you’re explicitly implementing a behaviour-defined function.

    defmodule EnglishGreeter do
      @behaviour GreeterBehaviour

      @impl true
      def greet(name), do: "Hello, #{name}!"
    end

    defmodule FrenchGreeter do
      @behaviour GreeterBehaviour

      @impl true
      def greet(name), do: "Bonjour, #{name}!"
    end

From there, we can call any module implementing the GreeterBehaviour behaviour without knowing its implementation details:

    defmodule GreetingService do
      def send_greeting(module, name) when is_atom(module) do
        module.greet(name)
      end
    end

    GreetingService.send_greeting(EnglishGreeter, "Alice")
    # "Hello, Alice!"

    GreetingService.send_greeting(FrenchGreeter, "Alice")
    # "Bonjour, Alice!"

While, contrary to those examples, behaviours are not the proper way to internationalise your application, they are a powerful tool when polymorphism is needed… Like dealing with multiple industry-specific CRMs!
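Since send_greeting/2 accepts any atom, it can be useful to check at runtime that a module really exports the callbacks before dispatching to it. The following is a minimal sketch, not part of the original code: SafeGreetingService and implements?/2 are hypothetical names, and the check relies on the behaviour_info/1 function that Elixir generates for modules declaring callbacks.

```elixir
defmodule GreeterBehaviour do
  @callback greet(name :: String.t()) :: String.t()
end

defmodule EnglishGreeter do
  @behaviour GreeterBehaviour

  @impl true
  def greet(name), do: "Hello, #{name}!"
end

defmodule SafeGreetingService do
  # Hypothetical helper: true when `module` is loaded and exports every
  # callback declared by `behaviour`.
  def implements?(module, behaviour) when is_atom(module) do
    Code.ensure_loaded?(module) and
      Enum.all?(behaviour.behaviour_info(:callbacks), fn {name, arity} ->
        function_exported?(module, name, arity)
      end)
  end

  # Dispatch only to modules that satisfy the behaviour's contract.
  def send_greeting(module, name) when is_atom(module) do
    if implements?(module, GreeterBehaviour) do
      {:ok, module.greet(name)}
    else
      {:error, :not_a_greeter}
    end
  end
end
```

This only verifies that the functions exist with the right arity; it does not check types or whether the module declared @behaviour.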

How we used behaviours

Let me introduce you to the final architecture of our CRM integrations feature:

In this diagram, CrmIntegrations is the feature context module, with public functions like create_crm_integration and sync_crm_integration. CrmIntegration is the schema module, representing the integration between an Organization and its CRM. CrmJob is an Oban worker module, responsible for running the import process asynchronously.

To avoid duplication and ensure maintainability, we keep CRM-agnostic utilities in other modules: Filtering contains functions to prevent importing duplicate properties; Persistence is the module responsible for saving the properties in the database; and Notifier is a real-time publish/subscribe service that informs the user interfaces of progress and errors in a human-readable way.

Finally, CRM modules implement the Crm behaviour, which requires functions implementing the CRM-specific logic:

    defmodule EncheresImmo.CrmIntegrations.Crm do
      @moduledoc """
      This behaviour module provides functions to transform CRM-specific data
      into Property changesets ready to be sent to Ecto.
      """

      # Aliases and imports...

      @doc """
      Get a list of raw properties from the CRM
      """
      @callback get_raw_properties(str :: map) :: list

      @doc """
      Parse a raw property from the CRM into a Property changeset ready to be
      sent to Ecto.
      """
      @callback convert_property_map(
                  property :: map,
                  agent_id :: binary,
                  integration :: CrmIntegration.t()
                ) :: map

      @doc """
      Get a unique identifier for a property.
      """
      @callback get_source_id(property :: map) :: binary

      @doc """
      CRM codes are used as keys. This list is used as single source of truth
      in many parts of the code.
      """
      @crms %{
        "apimo" => %{type: :api, module: Apimo, name: "Apimo"},
        "hektor" => %{type: :xml, module: Hektor, name: "Hektor"},
        "twimmo" => %{type: :csv, module: Twimmo, name: "Twimmo"}
        # Other CRMs...
      }

      # Some utility functions to work with the @crms map...
    end

For example, here is an extract of the Twimmo module, implementing convert_property_map/3 to parse CSV raw data into a Property changeset:

    defmodule EncheresImmo.CrmIntegrations.Twimmo do
      @moduledoc """
      Module for handling Twimmo's CSV files, dropped in our FTP server.
      Format specification: SeLoger411.pdf
      """
      @behaviour EncheresImmo.CrmIntegrations.Crm

      # Aliases and imports...

      # get_raw_properties/1

      @impl true
      def convert_property_map(property, agent_id, integration) do
        photos = convert_pictures(property)
        agent_id = Crm.get_agent_id(integration, get_field(property, 106), agent_id)
        id = get_source_id(property)
        name = get_field(property, 19) |> :unicode.characters_to_binary(:latin1, :utf8)
        description = get_field(property, 20) |> :unicode.characters_to_binary(:latin1, :utf8)

        %Property{
          original_name: name,
          original_description: description,
          area: option(get_field(property, 15), 0) |> parse_integer(),
          # Other fields...
          crm_integration: %{
            source_id: id,
            source: integration.source,
            source_agency_id: integration.source_agency_id
          }
        }
      end

      @impl true
      def get_source_id(property) do
        "twimmo_#{get_field(property, 1)}"
      end

      # CSV doesn't have keys, so we have to rely on the column order
      defp get_field(property, index), do: Enum.at(property, index) |> clean_field()

      defp get_certificate(value) when value in ["A", "B", "C", "D", "E", "F", "G"], do: value
      defp get_certificate(_), do: "N/A"

      # Other utility functions...
    end

Thanks to behaviours, we can focus on the parsing logic with almost no boilerplate code. The last step is to implement get_raw_properties with the CRM-specific data retrieval logic…
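To make the division of labour concrete, here is a minimal sketch of how a CRM-agnostic import step could resolve a module from a CRM code and then call the three callbacks. It is an illustration under assumptions, not the platform's actual code: the Sketch.* modules, fetch_module/1, run/4, the fake CRM and its fields are all hypothetical, and filtering is reduced to a set of already-imported source ids.

```elixir
defmodule Sketch.Crm do
  # Trimmed-down, hypothetical version of the behaviour.
  @callback get_raw_properties(agency_id :: binary) :: list
  @callback convert_property_map(property :: map, agent_id :: binary, integration :: map) :: map
  @callback get_source_id(property :: map) :: binary

  # Registry keyed by CRM code, mirroring the @crms map idea.
  @crms %{"fake" => %{type: :api, module: Sketch.FakeCrm, name: "Fake CRM"}}

  # Hypothetical utility: resolve the implementation module for a CRM code.
  def fetch_module(code) do
    case Map.fetch(@crms, code) do
      {:ok, %{module: module}} -> {:ok, module}
      :error -> {:error, :unknown_crm}
    end
  end
end

defmodule Sketch.FakeCrm do
  @behaviour Sketch.Crm

  @impl true
  def get_raw_properties(_agency_id) do
    [%{"id" => "1", "title" => "Flat"}, %{"id" => "2", "title" => "House"}]
  end

  @impl true
  def convert_property_map(property, agent_id, integration) do
    %{
      original_name: property["title"],
      agent_id: agent_id,
      source: integration.source,
      source_id: get_source_id(property)
    }
  end

  @impl true
  def get_source_id(property), do: "fake_#{property["id"]}"
end

defmodule Sketch.Importer do
  # Fetch, drop already-imported properties, then convert. The caller never
  # needs to know which CRM it is talking to.
  def run(code, agency_id, agent_id, already_imported \\ MapSet.new()) do
    with {:ok, crm} <- Sketch.Crm.fetch_module(code) do
      integration = %{source: code}

      properties =
        agency_id
        |> crm.get_raw_properties()
        |> Enum.reject(&MapSet.member?(already_imported, crm.get_source_id(&1)))
        |> Enum.map(&crm.convert_property_map(&1, agent_id, integration))

      {:ok, properties}
    end
  end
end
```

Adding a CRM in this sketch means writing one module and adding one entry to the map; the importer itself stays untouched.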

Sources and formats

Modern CRMs, like Apimo, use REST APIs. It’s the best-case scenario, as we can use req to make standard HTTP requests, and use its built-in JSON parser.

Example of a get_raw_properties implementation for a REST API:

    @impl true
    def get_raw_properties(agency_id) do
      case api_request(properties_endpoint(agency_id)) do
        {:ok, %Req.Response{body: body}} ->
          body["properties"] || []

        error ->
          CrmIntegrationNotifier.error(org_id)
          Logger.error("#{__MODULE__}: #{inspect(error)}")
          error
      end
    end

    defp api_request(endpoint) do
      Req.new(base_url: @base_url)
      |> Req.get(
        url: endpoint,
        headers: %{Authorization: "Basic " <> Base.encode64("#{api_provider()}:#{api_token()}")}
      )
    end

Sadly, the real estate world is not at the forefront of technology, and most CRMs still use FTP servers to exchange data. This means running a server on our side, managing access for each CRM, and parsing the files they drop in. Also, users have to wait for their CRM to send the file, which can take up to 24 hours…

We use Greg Sanderson’s custom client for Fly.io, built on top of alpine-ftp-server and vsftpd. Access is managed through an environment variable, with a folder per CRM. We put all related utilities, like FTP.get_files/2, in an FTP module.

Here is an extract of the Twimmo module, implementing get_raw_properties:

    @impl true
    def get_raw_properties(agency_id) do
      with {:ok, files} <- FTP.get_files("twimmo", agency_id),
           # Enum.find/2 returns nil when no file matches, so guard before reading
           file when is_binary(file) <- Enum.find(files, fn file -> file == "Annonces.csv" end),
           {:ok, content} <- File.read(file) do
        content |> SeLogerParser.parse_string()
      else
        error ->
          CrmIntegrationNotifier.error(org_id)
          Logger.error("#{__MODULE__}: #{inspect(error)}")
          error
      end
    end

    NimbleCSV.define(SeLogerParser,
      separator: "!#",
      escape: "\"",
      newlines: ["\r\n"],
      moduledoc: """
      (... Specification details)
      """
    )

In this example, we use NimbleCSV to parse CSV files, a simple library that allows you to define multiple parsers to handle different CSV formats [8]. Alternatively, we also use sweet-xml to parse XML files.

    !#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#"235922"!#"0"!#""!#"0"!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#"NON"!#"NON"!#""!#"NON"!#""!#""!#""!#""!#"NON"!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#"NON"!#"NON"!#""!#""!#""!#"NON"!#""!#""!#""!#""!#""!#""!#"NON"!#"0"!#"0"!#"NON"!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#""!#"0"!#"0"!#""!#"0"!#"0"!#"1"!#"4.08-006"!#""

8 – Extract from a SeLoger-compliant CSV file. Probably for someone’s job security, they use a custom !#"" delimiter, and OUI/NON for booleans. And since the format lacks keys, you have to rely on the column order, hence all the empty and NON values…
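To show what working with such a record involves, here is a dependency-free sketch that splits one line on the !# separator, reads fields by position, and maps OUI/NON to booleans. It is a simplification for illustration only: SeLogerSketch and its functions are hypothetical, and unlike a real CSV parser such as NimbleCSV it does not handle a separator or an escaped quote inside a quoted field.

```elixir
defmodule SeLogerSketch do
  @separator "!#"

  # Split one record into fields and strip the surrounding double quotes.
  def parse_line(line) do
    line
    |> String.trim_trailing("\r\n")
    |> String.split(@separator)
    |> Enum.map(&String.trim(&1, "\""))
  end

  # The format has no keys, so fields are addressed by column index.
  def field(fields, index), do: Enum.at(fields, index)

  # Booleans are spelled out in French in this format.
  def to_boolean("OUI"), do: true
  def to_boolean("NON"), do: false
  def to_boolean(_other), do: nil

  # Text fields arrive as Latin-1 and need converting to UTF-8.
  def latin1_to_utf8(binary) do
    :unicode.characters_to_binary(binary, :latin1, :utf8)
  end
end
```

A call such as SeLogerSketch.parse_line(line) |> SeLogerSketch.field(1) then plays the role of the get_field/2 helper seen earlier.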

And that’s it! We can import properties from any CRM with minimal effort, from REST APIs to FTP servers, with different data structures and formats.

User interface and error handling

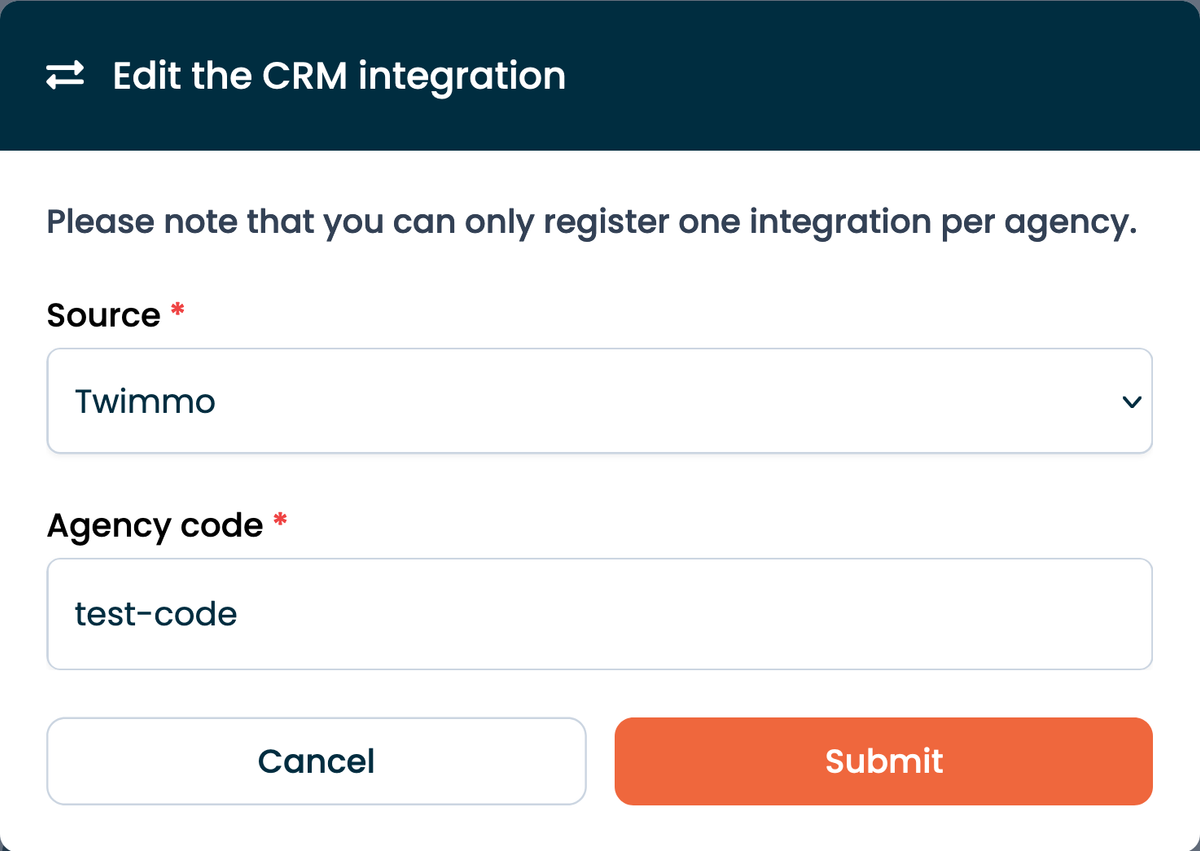

Thanks to our unified architecture, we can provide a consistent user experience across all CRMs. A manager or the owner of an organization can create a new integration in a few clicks, by selecting the CRM and entering its « agency code ».

An « Import from (source name) » button appears on the properties page, allowing users to trigger the import manually. The import process runs asynchronously and, as it can take a while, the user interface shows a loading spinner and a message indicating the progress.

This is powered by the CrmIntegrationNotifier module, which uses Phoenix.PubSub to broadcast messages to the user interfaces. During the import process, we can tell the user how many properties have been imported, how many are left, and which property was processed last:

    CrmIntegrationNotifier.progress(
      integration.organization_id,
      integration.source,
      imported_count,
      total_count,
      property_id
    )

LiveView components can then subscribe to the CrmIntegrationNotifier channel and, in our case, update the progress message and the properties list in real time:

    def mount(_params, session, socket) do
      CrmIntegrationNotifier.subscribe(org_id)
      # Other initializations...
    end

    def handle_info(
          {CrmIntegrationNotifier, :progress,
           %{
             imported: imported,
             total: total,
             last_inserted_property_id: last_inserted_property_id,
             source: source
           }},
          socket
        ) do
      # Update the progress message
      crm_import_status = %{
        "state" => "loading",
        "message" => crm_import_on_going_message(imported, total, source)
      }

      socket = assign(socket, crm_import_status: crm_import_status)

      # Update the properties list
      new_property = Properties.get_property!(last_inserted_property_id, nil)
      socket = stream_insert(socket, :properties, new_property, at: 0)

      {:noreply, socket}
    end

This can also be used to display the success or failure of the import:

    @impl true
    def handle_info({CrmIntegrationNotifier, :error, _data}, socket) do
      crm_import_status = %{"state" => "error", "message" => crm_import_error_message()}
      {:noreply, assign(socket, crm_import_status: crm_import_status)}
    end

    def handle_info({CrmIntegrationNotifier, :success, %{total: total, source: source}}, socket) do
      crm_import_status = %{
        "state" => "success",
        "message" => crm_import_success_message(total, source)
      }

      {:noreply, assign(socket, crm_import_status: crm_import_status)}
    end

As users can’t do much when an error occurs, we provide a simple message suggesting that they contact us. On our side, we log the error at the same time, as seen previously:

    error ->
      CrmIntegrationNotifier.error(org_id)
      Logger.error("#{__MODULE__}: #{inspect(error)}")
      error

This log is then sent to our AppSignal instance, where we can track the errors and see if they are recurrent. We also have a dedicated Slack channel where we receive notifications for each error, so we can react quickly if needed. Thus, we often inform the customer success team before the user reaches out.
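For readers curious about the shape of such a notifier, here is a minimal sketch of the subscribe/broadcast pattern behind the messages handled above. It deliberately swaps Phoenix.PubSub for Elixir's built-in Registry so that it runs without dependencies; NotifierSketch, its topic naming and its function signatures are assumptions for illustration, not the real CrmIntegrationNotifier.

```elixir
defmodule NotifierSketch do
  @registry NotifierSketch.Registry

  # A :duplicate registry lets many processes register under the same topic.
  def start_link, do: Registry.start_link(keys: :duplicate, name: @registry)

  # Called by the process that wants updates (a LiveView, in the article).
  def subscribe(org_id) do
    {:ok, _owner} = Registry.register(@registry, topic(org_id), nil)
    :ok
  end

  def progress(org_id, source, imported, total, property_id) do
    broadcast(org_id, :progress, %{
      source: source,
      imported: imported,
      total: total,
      last_inserted_property_id: property_id
    })
  end

  def error(org_id), do: broadcast(org_id, :error, %{})

  # Send a {module, event, data} tuple to every subscriber of the topic.
  defp broadcast(org_id, event, data) do
    Registry.dispatch(@registry, topic(org_id), fn subscribers ->
      for {pid, _value} <- subscribers, do: send(pid, {__MODULE__, event, data})
    end)
  end

  defp topic(org_id), do: "crm_integration:#{org_id}"
end
```

Scoping the topic by organization means each subscriber only receives updates for its own imports, which is the property the user interface relies on.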

Testing

A critical advantage of using behaviours is that they make testing easier and more reliable—especially when combined with Mox, which leverages behaviours to easily mock dependencies.

To integrate Mox, we first declare a mock module in our test setup:

    Mox.defmock(EncheresImmo.CrmIntegrations.CrmMock, for: EncheresImmo.CrmIntegrations.Crm)

Then, we inject this mock into the components we want to test. Here’s a simplified example of how we could test our CrmJob module:

    test "minimal import properties test", %{integration: integration} do
      # Change the schema to match the CRM documentation, and its format
      raw_properties = [
        %{
          "id" => "123",
          "name" => "Test Property"
          # Other fields...
        }
      ]

      # Set expectations for our mock
      EncheresImmo.CrmIntegrations.CrmMock
      |> expect(:get_raw_properties, fn _agency_id -> raw_properties end)
      |> expect(:convert_property_map, fn _raw_property, _agent_id, _integration ->
        %Property{original_name: "Test Property"}
      end)
      |> expect(:get_source_id, fn _property -> "crm_123" end)

      # Inject our mock into the job
      assert :ok =
               CrmJob.perform(
                 %Oban.Job{args: %{integration_id: integration.id}},
                 EncheresImmo.CrmIntegrations.CrmMock
               )

      # Then check if the property was created correctly...
    end

With this approach, each behaviour implementation is tested independently from the rest of the application. We don’t make real API requests or FTP file retrievals, meaning our tests are not only faster but also significantly more robust.

This isolation is particularly beneficial for Test-Driven Development (TDD), as it allows us to define clear contracts upfront, iterate quickly, and incrementally implement and refine the corresponding modules.
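The injection point itself can be sketched without any mocking library. In the example below, which is an assumption-laden illustration rather than the real CrmJob, the job takes the CRM module as an optional argument and otherwise reads it from application configuration; the InjectionSketch.* modules, the :injection_sketch application name and the :crm_module key are all hypothetical.

```elixir
defmodule InjectionSketch.Crm do
  @callback get_raw_properties(agency_id :: binary) :: list
end

defmodule InjectionSketch.StubCrm do
  # Hand-written stand-in that satisfies the behaviour with canned data.
  @behaviour InjectionSketch.Crm

  @impl true
  def get_raw_properties(_agency_id) do
    [%{"id" => "123", "name" => "Test Property"}]
  end
end

defmodule InjectionSketch.Job do
  # The implementation is a parameter, so tests can pass a stub or a mock
  # while production code relies on the configured module.
  def count_properties(agency_id, crm \\ crm_module()) do
    agency_id
    |> crm.get_raw_properties()
    |> length()
  end

  defp crm_module do
    Application.fetch_env!(:injection_sketch, :crm_module)
  end
end
```

Because the stub declares @behaviour, the compiler warns if it drifts from the contract, which is the same guarantee a behaviour-based mock relies on.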

Conclusion

Our world is spooky, filled with legacy systems and non-standard data structures… But our CRM integration feature has been up and running for almost 3 years, with multiple refactors from different team members, eight different CRMs supported, thousands of properties imported, and many happy users.

This real case study shows that Elixir behaviours are a powerful tool to handle polymorphism and ensure maintainability, even in a complex feature. If we need to add a new CRM tomorrow, we can do it in a few hours, with minimal effort and no risk of breaking the existing ones.

I hope this entry will help you handle different data sources in your projects. The hardest part is often obtaining the documentation and testing files from the providers… But that’s another story!